Hands-On Distributed Systems Engineering in Go

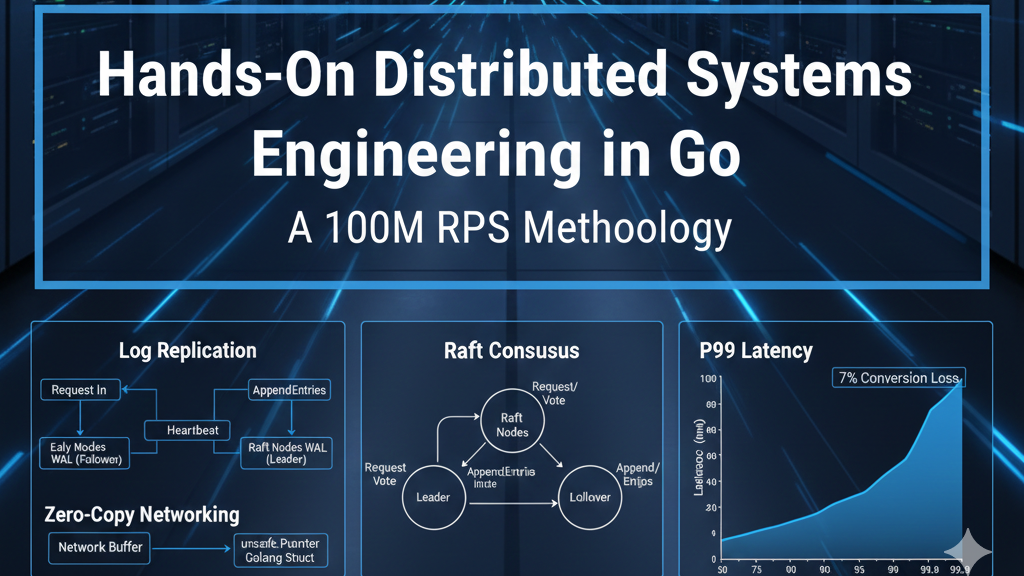

Engineering High-Performance Distributed Systems in Go: A 100M RPS Methodology

The architecture of a system capable of sustaining 100 million requests per second (RPS) represents the pinnacle of modern software engineering. At such a scale, the traditional abstractions of programming languages and operating systems begin to reveal their inherent latencies. The transition from a standard backend service to an ultra-high-throughput engine requires an intimate understanding of the Go runtime, the Linux kernel, and the physical constraints of hardware. This report outlines a comprehensive pedagogical framework for mastering these disciplines through the construction of a distributed, in-memory key-value store.

Why This Course?

The industry demand for engineers who can operate at the limits of hardware is accelerating as data volumes and user expectations for real-time responsiveness grow. Traditional educational paths often focus on the "what" and "how" of software development—CRUD operations, basic API design, and standard database usage—but frequently neglect the "why" regarding performance bottlenecks at the operations-per-second threshold.

This curriculum is designed to solve the "performance paradox" where adding more hardware results in diminishing returns due to lock contention, garbage collection (GC) pauses, and network overhead. By focusing on a distributed key-value store, students engage with every major challenge in distributed systems: consensus, memory management, concurrency, and zero-copy networking. The course moves beyond surface-level optimizations, forcing an investigation into the Go mark-phase CPU utilization, the overhead of the reflect package, and the impact of the speed of light on cross-region quorums.

The economic and technical implications of these optimizations are substantial. In high-load systems, a 50% increase in throughput on the same hardware means the same load can be served with roughly one third fewer machines, which at large fleet sizes can amount to millions of dollars in saved infrastructure costs. Furthermore, reducing P99 latency is not merely a technical vanity metric; it is a critical driver of user retention and conversion, as research indicates that even a one-second delay can reduce conversions by 7%.

What You'll Build

The central project, AionDB, is a distributed, in-memory key-value store engineered for 100M RPS. This is not a toy implementation but a production-grade engine that mirrors the architectural complexities of systems like TiKV and Pebble. The project is subdivided into three core architectural layers.

The first layer is a high-concurrency storage engine. Students will implement a hybrid data structure utilizing concurrent Skip Lists and B+Trees to balance the requirements of wait-free reads and cache-efficient lookups. This engine incorporates manual memory management via the unsafe package and sync.Pool to bypass the common pitfalls of the Go garbage collector when managing large in-memory heaps.

The second layer is a distributed consensus module based on the Raft algorithm. This module ensures strong consistency and fault tolerance across a cluster of nodes. The implementation goes beyond the basic Raft paper, introducing advanced optimizations such as log pipelining, request batching, and lease reads to ensure that consensus does not become the bottleneck for the 100M RPS target.

The final layer is a zero-copy networking stack. By utilizing custom binary protocols and bypassing the standard library's reflection-heavy serialization, the system achieves ultra-low latency communication between nodes and clients. This layer also includes a sophisticated observability suite, integrating distributed tracing and the "Four Golden Signals" to provide a holistic view of the system’s health under extreme stress.

Who Should Take This Course?

This curriculum targets a diverse spectrum of engineering professionals who are dissatisfied with high-level abstractions and seek to master the "magic" occurring beneath the surface.

For fresh graduates, the course provides a rigorous transition from academic theory to the reality of production-grade distributed systems. It replaces idealized assumptions—such as reliable networks and zero-latency communication—with the harsh constraints of real-world infrastructure. For senior software engineers and architects, the course offers a deep dive into the Go runtime's internals, providing the tools needed to squeeze every ounce of performance from a multi-core server.

Engineering managers and product leaders will find value in the sections regarding non-functional requirements (NFRs) and architectural trade-offs. Understanding the PACELC theorem and the impact of P99 latency on business outcomes allows leaders to make informed decisions about feature prioritization and infrastructure spend. Finally, SRE and QA engineers will benefit from the chaos engineering and observability modules, learning how to harden systems against the unpredictable failures inherent in large-scale distributed environments.

What Makes This Course Different?

The primary differentiator of this course is its "no-magic" philosophy. While many Go courses rely on external libraries for networking, serialization, and consensus, this curriculum mandates building these components from the ground up. This approach reveals the nuances of memory allocation, the cost of interface abstractions, and the intricacies of the Go scheduler that are otherwise hidden.

The course also focuses on "non-obvious" insights. For example, it is a common misconception that the best way to optimize a Go program is to reduce the number of allocations. However, in systems with very large heaps, the real bottleneck is often the "live set"—the number of pointers the GC must traverse during the mark phase. The curriculum teaches students to minimize the live set through pointer-free structures and manual memory layouts, a technique rarely discussed in standard Go literature.

Furthermore, the course integrates the psychological and business aspects of performance engineering. It explores the relationship between latency and human cognition, teaching designers and engineers how to support different time scales of perception through UI/UX patterns like optimistic updates and progress indicators.

Key Topics Covered

The curriculum is structured around six technical pillars, each essential for achieving the 100M RPS milestone.

1. Advanced Go Memory Management

Students will master the nuances of the Go memory model, including escape analysis, stack vs. heap allocation, and the internals of the concurrent mark-sweep garbage collector. Special emphasis is placed on the unsafe package for zero-copy casting and manual memory layout, allowing conversions between binary protocols and internal data structures without copying the underlying bytes.
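As a rough illustration of the zero-copy casting described above, the sketch below reinterprets a byte slice as a string without copying. It assumes Go 1.20 or later (for unsafe.String, unsafe.SliceData, and unsafe.StringData); the helper names bytesToString and sharesMemory are hypothetical and not part of the course material.

```go
package main

import (
	"fmt"
	"unsafe"
)

// bytesToString reinterprets b as a string without copying.
// The caller must not modify b afterwards, because the rest of the
// program is entitled to assume strings are immutable.
func bytesToString(b []byte) string {
	if len(b) == 0 {
		return ""
	}
	return unsafe.String(unsafe.SliceData(b), len(b))
}

// sharesMemory reports whether s and b begin at the same address,
// which is how this sketch checks that no copy was made.
func sharesMemory(b []byte, s string) bool {
	if len(b) == 0 || len(s) == 0 {
		return false
	}
	return unsafe.StringData(s) == unsafe.SliceData(b)
}

func main() {
	buf := []byte("user:42") // stands in for a network buffer
	key := bytesToString(buf)
	fmt.Println(key, sharesMemory(buf, key))
}
```

The trade-off is that the string is only valid while the buffer is left untouched, so a design like this usually needs a clear ownership rule for buffers.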

2. High-Concurrency Data Structures

The course provides an empirical comparison of data structures, evaluating the trade-offs between B+Trees (optimized for cache locality and fewer memory transfers) and Skip Lists (optimized for lock-free concurrent modifications). Students will implement sharded mutexes and lock-free algorithms using atomic operations to minimize contention.
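The sharded-mutex idea mentioned above can be sketched as follows. This is a minimal example under stated assumptions: the shard count of 64, the FNV-1a hash, and the ShardedCounter type are illustrative choices, not the course's actual design.

```go
package main

import (
	"fmt"
	"hash/fnv"
	"sync"
)

// shardCount is an assumed value; it is a power of two so that the
// bit mask in shardFor selects a shard uniformly.
const shardCount = 64

type shard struct {
	mu sync.Mutex
	m  map[string]uint64
}

// ShardedCounter spreads keys over independent locks so that
// goroutines touching different keys rarely contend.
type ShardedCounter struct {
	shards [shardCount]shard
}

func NewShardedCounter() *ShardedCounter {
	c := &ShardedCounter{}
	for i := range c.shards {
		c.shards[i].m = make(map[string]uint64)
	}
	return c
}

func (c *ShardedCounter) shardFor(key string) *shard {
	h := fnv.New32a()
	h.Write([]byte(key))
	return &c.shards[h.Sum32()&(shardCount-1)]
}

func (c *ShardedCounter) Incr(key string) {
	s := c.shardFor(key)
	s.mu.Lock()
	s.m[key]++
	s.mu.Unlock()
}

func (c *ShardedCounter) Get(key string) uint64 {
	s := c.shardFor(key)
	s.mu.Lock()
	defer s.mu.Unlock()
	return s.m[key]
}

// hammer runs the given number of workers, each incrementing every key
// `rounds` times, and returns once all of them have finished.
func hammer(c *ShardedCounter, workers, rounds int, keys []string) {
	var wg sync.WaitGroup
	for w := 0; w < workers; w++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			for r := 0; r < rounds; r++ {
				for _, k := range keys {
					c.Incr(k)
				}
			}
		}()
	}
	wg.Wait()
}

func main() {
	c := NewShardedCounter()
	hammer(c, 8, 1000, []string{"a", "b", "c"})
	fmt.Println(c.Get("a"), c.Get("b"), c.Get("c"))
}
```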

3. Distributed Consensus and Consistency

A deep dive into the Raft consensus algorithm is provided, covering leader election, log replication, and safety guarantees. The curriculum also explores the CAP and PACELC theorems, teaching students how to balance the requirements of strong vs. eventual consistency based on specific use cases.
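One small piece of log replication can be sketched in a few lines: how a leader derives the commit index from what each node has acknowledged. The function name commitIndex and the slice-based representation are assumptions for illustration. The sketch also leaves out Raft's additional rule that a leader only commits by counting replicas for entries from its current term.

```go
package main

import (
	"fmt"
	"sort"
)

// commitIndex returns the highest log index known to be stored on a
// majority of nodes. matchIndex holds one entry per cluster member,
// including the leader's own last log index.
func commitIndex(matchIndex []uint64) uint64 {
	if len(matchIndex) == 0 {
		return 0
	}
	sorted := append([]uint64(nil), matchIndex...)
	// Sort descending: position quorum-1 is then the largest index
	// that at least `quorum` nodes have reached.
	sort.Slice(sorted, func(i, j int) bool { return sorted[i] > sorted[j] })
	quorum := len(sorted)/2 + 1
	return sorted[quorum-1]
}

func main() {
	// Three nodes: the leader is at 10, the followers at 7 and 3.
	fmt.Println(commitIndex([]uint64{10, 7, 3}))
}
```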

4. Zero-Copy Networking and Serialization

Students will implement binary protocols that outperform standard JSON and gRPC implementations by reducing reflection and allocation overhead. This includes a study of Protobuf, MessagePack, and FlatBuffers, with a focus on their architectural trade-offs.
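To make the idea of a reflection-free binary protocol concrete, here is a minimal sketch of a fixed-header frame. The layout (1-byte opcode, 2-byte key length, 4-byte value length, big-endian) is a hypothetical format chosen for the example, not AionDB's wire protocol. It uses only encoding/binary from the standard library and assumes Go 1.19 or later for AppendUint16 and AppendUint32.

```go
package main

import (
	"encoding/binary"
	"errors"
	"fmt"
)

// Hypothetical frame layout:
// | op: 1 byte | keyLen: uint16 BE | valLen: uint32 BE | key | value |
const headerSize = 1 + 2 + 4

var errShort = errors.New("frame: buffer too short")

// appendFrame encodes a frame onto dst and returns the extended slice.
// The caller must keep len(key) within 65535 bytes.
func appendFrame(dst []byte, op byte, key, val []byte) []byte {
	dst = append(dst, op)
	dst = binary.BigEndian.AppendUint16(dst, uint16(len(key)))
	dst = binary.BigEndian.AppendUint32(dst, uint32(len(val)))
	dst = append(dst, key...)
	return append(dst, val...)
}

// parseFrame decodes one frame. The returned key and val are views
// into buf, so no payload bytes are copied.
func parseFrame(buf []byte) (op byte, key, val []byte, err error) {
	if len(buf) < headerSize {
		return 0, nil, nil, errShort
	}
	op = buf[0]
	keyLen := uint64(binary.BigEndian.Uint16(buf[1:3]))
	valLen := uint64(binary.BigEndian.Uint32(buf[3:7]))
	if uint64(len(buf)-headerSize) < keyLen+valLen {
		return 0, nil, nil, errShort
	}
	body := buf[headerSize:]
	return op, body[:keyLen], body[keyLen : keyLen+valLen], nil
}

func main() {
	frame := appendFrame(nil, 1, []byte("user:42"), []byte("alice"))
	op, key, val, err := parseFrame(frame)
	fmt.Println(op, string(key), string(val), err)
}
```

Passing a pre-allocated dst with spare capacity lets the encoder run without allocating, which is the property the serialization comparison is concerned with.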

5. Observability and SRE at Scale

The course covers the implementation of distributed tracing, structured logging, and real-time metrics. Students will learn how to monitor the "Golden Signals" and manage the "long tail" of P99 latency through techniques like request coalescing and load shedding.
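Load shedding, as mentioned above, can be as simple as refusing work once too many requests are already in flight. The sketch below is one possible shape, assuming Go 1.19 or later for atomic.Int64; the Shedder type and its limit are hypothetical.

```go
package main

import (
	"errors"
	"fmt"
	"sync/atomic"
)

// ErrOverloaded is returned when a request is rejected to protect
// the latency of the requests already being served.
var ErrOverloaded = errors.New("overloaded: request shed")

// Shedder caps the number of concurrently admitted requests.
type Shedder struct {
	inFlight atomic.Int64
	limit    int64
}

func NewShedder(limit int64) *Shedder { return &Shedder{limit: limit} }

// Acquire admits the request or rejects it immediately; it never blocks,
// so an overloaded server fails fast instead of building a queue.
func (s *Shedder) Acquire() error {
	if s.inFlight.Add(1) > s.limit {
		s.inFlight.Add(-1)
		return ErrOverloaded
	}
	return nil
}

// Release must be called once for every successful Acquire.
func (s *Shedder) Release() { s.inFlight.Add(-1) }

func main() {
	s := NewShedder(2)
	fmt.Println(s.Acquire(), s.Acquire(), s.Acquire())
	s.Release()
	fmt.Println(s.Acquire())
}
```

A fixed limit is the simplest policy; an adaptive variant would adjust the limit from observed latency.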

6. Chaos Engineering and Hardening

The final pillar focuses on the proactive injection of faults—such as network partitions, CPU saturation, and disk failures—to verify the system's resilience and failover mechanisms.

Prerequisites

Programming Proficiency: Candidates must have a solid grasp of Go’s syntax and idiomatic patterns, particularly interfaces and goroutines.

Operating Systems: A basic understanding of the Linux process model, including memory segments (stack, heap, text) and the virtual memory system.

Computer Networking: Familiarity with the TCP/IP stack, the OSI model, and basic socket programming is required.

Mathematical Maturity: Comfort with big-O notation, logarithmic complexities, and basic probability is necessary for analyzing data structures and implementing probabilistic algorithms.

Course Structure

The course is divided into six phases, each culminating in a significant feature for the AionDB project.

Curriculum and 90 Hands-on Lessons

Phase I: Mastering the Go Runtime and Memory (Lessons 1-15)

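Lesson 1: The Quantitative Reality of 100M RPS. Understanding that at this scale the system as a whole must complete one request every 10 nanoseconds, so a fleet of N cores leaves each core only N × 10 ns per request (for example, 640 ns on 64 cores, or roughly 1,900 cycles at 3 GHz). Establishing the "cycle budget" for a single operation.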

Lesson 2: Escape Analysis and the Cost of the Heap. Using compiler flags to visualize where variables are allocated. Learning to design "stack-friendly" code to avoid the overhead of the Go heap.

Lesson 3: The Garbage Collector’s Hidden Cost. Investigating why the GC mark phase—not the sweep phase—is the primary driver of latency in large-memory Go applications.

Lesson 4: sync.Pool Internals and Pitfalls. Implementing object pooling to reduce allocation churn. Understanding the "victim cache" and why pooling is not a silver bullet.

Lesson 5: Manual Memory with the unsafe Package. Mastering unsafe.Pointer and uintptr. Implementing a zero-copy cast from a byte slice to a typed struct.

Lesson 6: Memory Alignment and Padding. Analyzing how struct field ordering affects memory usage. Designing compact structures for high-density in-memory storage.

Lesson 7: Pointer-Free Data Structures. Learning why the GC does not need to scan pointer-free structures and how this can be used to manage multi-gigabyte heaps with sub-millisecond pauses.

Lesson 8: The reflect Package: A Performance Trap. Measuring the overhead of reflection in Go and implementing code-generation alternatives to avoid it at runtime.

Lesson 9: Benchmarking Foundations with go test -bench. Designing scientifically valid benchmarks. Using benchstat to compare results across code versions.

Lesson 10: CPU Profiling and Flamegraphs. Identifying hot paths in the storage engine. Learning to read pprof output to distinguish between user code and runtime overhead.

Lesson 11: Memory Profiling and Allocation Tracking. Using the memory profiler to identify leaks and excessive heap growth. Differentiating between alloc_space and inuse_space.

Lesson 12: The Architecture of Go Slices and Strings. Understanding the internal slice header (pointer, length, capacity), historically exposed as reflect.SliceHeader and deprecated since Go 1.20 in favor of unsafe.Slice and unsafe.SliceData, and how to reuse underlying arrays without copying.

Lesson 13: Large Objects and the GC’s "Write Barrier". Investigating the impact of the write barrier on concurrent performance. Learning to minimize pointer updates in hot loops.

Lesson 14: Pre-allocation Strategies. Implementing "Arena" patterns in Go to manage memory in bulk, reducing the number of small, fragmented allocations.

Lesson 15: Module Project: The "StaticKV" Engine. Building a basic in-memory store that uses zero-copy pointers and pre-allocated buffers to achieve maximum local throughput.
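The effect of struct field ordering from Lesson 6 can be observed directly with unsafe.Sizeof. The two record types below are hypothetical and hold identical fields; only the order differs. Exact sizes depend on the platform's alignment rules, so the sketch compares the two rather than assuming specific numbers.

```go
package main

import (
	"fmt"
	"unsafe"
)

// padded interleaves small and large fields, so the compiler must
// insert padding bytes to keep version and ttl aligned.
type padded struct {
	live    bool
	version uint64
	deleted bool
	ttl     uint32
}

// compact orders the same fields from largest to smallest, which
// leaves padding only at the end of the struct.
type compact struct {
	version uint64
	ttl     uint32
	live    bool
	deleted bool
}

// sizes returns the in-memory size of each layout on this platform.
func sizes() (paddedSize, compactSize uintptr) {
	return unsafe.Sizeof(padded{}), unsafe.Sizeof(compact{})
}

func main() {
	p, c := sizes()
	fmt.Printf("padded=%d bytes, compact=%d bytes\n", p, c)
}
```

On a typical 64-bit target this reports 24 bytes against 16, a difference that adds up quickly across hundreds of millions of in-memory records.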

Phase II: High-Concurrency and Lock-Free Programming (Lessons 16-30)

Lesson 16: The Physics of Mutex Contention. Measuring the cost of context switching when goroutines block on a lock. Visualizing "lock queues" under high concurrency.

Lesson 17: Sharded Mutexes: The First Step in Scaling. Implementing a map with thousands of concurrent buckets to distribute lock contention across the CPU.

Lesson 18: Atomic Operations and sync/atomic. Learning to use CAS (Compare-And-Swap) and atomic increments. Implementing a wait-free counter for request tracking.

Lesson 19: The Anatomy of a Skip List. Understanding the probabilistic balance of the skip list. Why it is more amenable to concurrent access than a balanced tree.

Lesson 20: Implementing a Concurrent Skip List. Using atomic pointers to link nodes. Handling concurrent insertions and deletions without a global lock.

Lesson 21: B+Trees and Cache Locality. Investigating why B+Trees outperform other trees by reducing memory transfers (O(log n / log B)) and fitting nodes into cache lines.

Lesson 22: sync.Map: The Good, The Bad, and The Ugly. Analyzing the "read" and "dirty" map architecture. Learning the specific workload where sync.Map outperforms a mutex.

Lesson 23: Reader-Writer Mutexes vs. Standard Mutexes. Measuring the overhead of sync.RWMutex for various read/write ratios. Identifying the threshold where RWMutex becomes a bottleneck.

Lesson 24: Lock-Free Queues and Circular Buffers. Implementing a lock-free ring buffer for passing data between ingestion and processing goroutines.

Lesson 25: The "ABA" Problem in Lock-Free Logic. Understanding the primary pitfall of CAS and how to mitigate it with versioning or pointer tagging.

Lesson 26: False Sharing and Cache Line Padding. Learning how multiple goroutines writing to adjacent memory addresses can destroy performance. Implementing padding to isolate hot variables.

Lesson 27: Wait-Free Reads via RCU (Read-Copy-Update). Implementing a pattern where readers never block, and writers create new copies of data to swap atomically.

Lesson 28: Adaptive Sharding and Resizing. Designing a store that can grow its number of shards dynamically as traffic increases, without blocking existing requests.

Lesson 29: Memory Barriers and Reordering. Investigating how the Go compiler and the CPU reorder instructions. Learning when to use atomics to ensure memory visibility.

Lesson 30: Module Project: The "TurboShard" Index. Building a concurrent index that combines sharding, skip lists, and atomic operations to handle 10M+ local operations per second.
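The read-copy-update pattern from Lesson 27 can be approximated in Go with an atomic pointer to an immutable snapshot. The sketch below is a simplified version under stated assumptions: the COWMap type is hypothetical, it relies on atomic.Pointer (Go 1.19 or later), and it copies the whole map on every write, which only suits read-heavy data.

```go
package main

import (
	"fmt"
	"sync"
	"sync/atomic"
)

// Snapshot is treated as immutable once published.
type Snapshot map[string]string

// COWMap lets readers proceed without locks while writers publish
// modified copies with a compare-and-swap.
type COWMap struct {
	cur atomic.Pointer[Snapshot]
}

func NewCOWMap() *COWMap {
	m := &COWMap{}
	empty := Snapshot{}
	m.cur.Store(&empty)
	return m
}

// Get never blocks: it loads the current snapshot and reads from it.
func (m *COWMap) Get(key string) (string, bool) {
	v, ok := (*m.cur.Load())[key]
	return v, ok
}

// Len reports the number of keys in the current snapshot.
func (m *COWMap) Len() int { return len(*m.cur.Load()) }

// Set copies the current snapshot, applies the write to the copy, and
// publishes it. If another writer got there first, it retries.
func (m *COWMap) Set(key, val string) {
	for {
		old := m.cur.Load()
		next := make(Snapshot, len(*old)+1)
		for k, v := range *old {
			next[k] = v
		}
		next[key] = val
		if m.cur.CompareAndSwap(old, &next) {
			return
		}
	}
}

func main() {
	m := NewCOWMap()
	var wg sync.WaitGroup
	for i := 0; i < 8; i++ {
		wg.Add(1)
		go func(id int) {
			defer wg.Done()
			m.Set(fmt.Sprintf("node-%d", id), "up")
		}(i)
	}
	wg.Wait()
	v, ok := m.Get("node-3")
	fmt.Println(m.Len(), v, ok)
}
```

Because old snapshots are reclaimed by the garbage collector, this variant avoids the explicit grace-period tracking that kernel-style RCU requires.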

Phase III: The Zero-Copy Networking Stack (Lessons 31-45)

Lesson 31: The Overhead of the Standard net/http Stack. Analyzing the allocation costs of the standard Go HTTP server. Why it is unsuitable for 100M RPS.

Lesson 32: Custom Binary Protocols vs. Text-Based Protocols. Comparing the parsing cost of JSON vs. a fixed-width binary format.

Lesson 33: Protobuf Internals and Optimization. Mastering Protocol Buffers. Learning how to use "pooling" and "recycling" with Protobuf to avoid allocation during marshaling.

Lesson 34: MessagePack as a Drop-in JSON Replacement. Evaluating MessagePack for scenarios where schema flexibility is required but speed is paramount.

Lesson 35: Zero-Copy Deserialization with FlatBuffers. Understanding the "vtable" approach. Accessing binary fields directly from the network buffer without parsing.

Lesson 36: Multiplexing and Frame-Based Protocols. Designing a protocol that allows multiple requests to share a single TCP connection without head-of-line blocking.

Lesson 37: TCP Fast Open and Network Tuning. Adjusting kernel parameters and enabling TFO to reduce the latency of establishing new connections.

Lesson 38: The Thundering Herd and Request Coalescing. Implementing the singleflight pattern to ensure that only one node-to-node request is made for a shared key.

Lesson 39: Probabilistic Early Expiration. Using a biased random coin to refresh hot keys before they expire, preventing a cache stampede.

Lesson 40: Zero-Copy Networking via unsafe and mmap. Learning to map large data files into memory and send their contents to the network without copying them into the application space.

Lesson 41: Implementing a Fast-Path Binary RPC. Building a custom RPC server that utilizes pre-allocated byte buffers and zero-copy headers.

Lesson 42: Batching and Pipelining in Network Calls. Implementing client-side batching to reduce the number of syscalls. Learning how pipelining improves throughput at the cost of complexity.

Lesson 43: Hand-writing Go Assembly for Fast Serialization. Introduction to the Go assembly syntax. Using SIMD instructions to accelerate checksumming or data transformations.

Lesson 44: SIMD Vectorization in Go 1.26. Utilizing the new experimental simd/archsimd package (enabled with GOEXPERIMENT=simd) to perform parallel operations on network buffers.

Lesson 45: Module Project: The "ZeroNet" RPC Framework. Constructing a complete client-server RPC system that achieves sub-microsecond serialization and zero allocation.
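The "biased random coin" of Lesson 39 can take several forms. The sketch below uses one published variant, often called XFetch, which is an assumption here rather than necessarily the course's choice: a key is refreshed early when the remaining time to live falls below delta × beta × (−ln u), where delta is the cost of recomputing the value, beta tunes eagerness, and u is uniform in (0, 1]. The function name shouldRefresh and its parameters are hypothetical.

```go
package main

import (
	"fmt"
	"math"
	"math/rand"
	"time"
)

// shouldRefresh decides whether a cached value should be recomputed now.
//
//	remaining: time left until the entry expires
//	delta:     how long a recomputation takes
//	beta:      eagerness; 1.0 is the usual default, larger refreshes earlier
//	u:         a random number in (0, 1]
//
// -ln(u) is zero for u = 1 and grows as u approaches zero, so most calls
// add a small head start and a few add a large one. That spreads
// refreshes over time instead of stampeding at the expiry instant.
func shouldRefresh(remaining, delta time.Duration, beta, u float64) bool {
	headStart := time.Duration(float64(delta) * beta * -math.Log(u))
	return headStart >= remaining
}

func main() {
	remaining := 2 * time.Second
	delta := 500 * time.Millisecond
	refreshes := 0
	for i := 0; i < 1000; i++ {
		u := 1 - rand.Float64() // maps [0, 1) onto (0, 1]
		if shouldRefresh(remaining, delta, 1.0, u) {
			refreshes++
		}
	}
	fmt.Printf("%d of 1000 callers would refresh early\n", refreshes)
}
```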

Phase IV: Distributed Consensus with Raft (Lessons 46-60)

Lesson 46: The Consistency Spectrum: CAP and PACELC. Understanding why consistency and availability are a trade-off during partitions, and why consistency and latency are a trade-off otherwise.

Lesson 47: The Raft Consensus Algorithm: Overview. Studying the Raft paper. Understanding the roles of Leader, Follower, and Candidate.

Lesson 48: Implementing Leader Election. Building the heartbeat mechanism and the randomized election timeout. Handling split-vote scenarios.

Lesson 49: Log Replication and the State Machine. Implementing the AppendEntries RPC. Ensuring that all nodes apply commands in the exact same order.

Lesson 50: Strong Consistency and Linearizability. Understanding the guarantees of strong consistency. Why a system must behave as if there is only a single copy of data.

Lesson 51: Handling Network Partitions and Re-elections. Simulating a partition and verifying that the majority can still function while the minority blocks.

Lesson 52: Optimizing Raft: Batching Proposals. Modifying the Raft core to handle thousands of requests in a single log entry to maximize throughput.

Lesson 53: Optimizing Raft: Log Pipelining. Allowing the leader to send multiple AppendEntries RPCs before receiving an acknowledgment for the first.

Lesson 54: Raft Log Compaction and Snapshots. Implementing the snapshotting mechanism to prevent the WAL from growing infinitely. Transferring snapshots to new nodes.

Lesson 55: Lease Reads: Bypassing the Quorum. Optimizing read performance by allowing the leader to serve reads locally if its lease has not expired.

Lesson 56: Joint Consensus and Configuration Changes. Dynamically adding and removing nodes from the cluster without stopping the consensus process.

Lesson 57: The Multi-Raft Architecture. Implementing sharding at the Raft level. Managing hundreds of independent Raft groups (as in TiKV).

Lesson 58: Pre-Vote and Check-Quorum. Implementing safety optimizations to prevent disruptive re-elections when a node is isolated.

Lesson 59: Distributed Transactions via 2PC. Implementing the Two-Phase Commit protocol on top of Raft to ensure atomicity across multiple shards.

Lesson 60: Module Project: The "RaftKV" Cluster. Integrating the storage engine and consensus module into a three-node cluster that survives any single node failure.

Phase V: Advanced Persistence and LSM-Trees (Lessons 61-75)

Lesson 61: The Write-Ahead Log (WAL) Internals. Implementing an append-only log for durability. Comparing synchronous vs. asynchronous fsync.

Lesson 62: Log-Structured Merge (LSM) Trees. Understanding the trade-off: fast sequential writes vs. slower, multi-layer reads.

Lesson 63: Memtables and SSTables. Implementing the in-memory buffer (Skip List) and the immutable on-disk format for the storage engine.

Lesson 64: Bloom Filters for Faster SSTable Reads. Implementing a probabilistic bitmask to skip reading SSTables that do not contain a specific key.

Lesson 65: Compaction Strategies: Size-Tiered vs. Leveled. Analyzing the trade-offs in write amplification and read latency between different compaction models.

Lesson 66: Value Separation in Pebble. Storing values in separate blob files to reduce the I/O cost of re-writing large values during compaction.

Lesson 67: Hint Files for Accelerated Recovery. Implementing pre-calculated indexes to allow the database to restart in milliseconds by skipping full WAL scans.

Lesson 68: Buffer Cache Management (LRU). Implementing a cache for disk blocks. Understanding why the OS page cache is often not enough for database workloads.

Lesson 69: Mmap and Direct I/O in Go. Mastering the syscall package to perform low-level disk operations that bypass the kernel page cache and its copying overhead.

Lesson 70: Corruption Detection with CRC32. Implementing block-level checksums to ensure data integrity during storage and retrieval.

Lesson 71: Sparse Indexes for SSTables. Designing a search index that fits in memory while pointing to blocks of data on disk.

Lesson 72: Merging V2 and Memory Efficiency. Optimizing the iterator and merge logic to reduce the allocation footprint during range queries.

Lesson 73: Prefix Compression and Key Encoding. Reducing the size of on-disk data by exploiting common prefixes in sorted keys.

Lesson 74: Storage Device Performance Monitoring. Learning to track disk queue depth and I/O wait. Understanding how network-attached storage (EBS) impacts Raft latency.

Lesson 75: Module Project: The "DurableKV" Engine. Finalizing a persistence layer that provides ACID guarantees while maintaining high throughput via sequential I/O.
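The Bloom filter from Lesson 64 fits in a few dozen lines. This is a minimal sketch, not a tuned implementation: the Bloom type is hypothetical, and deriving the probe positions from the two halves of a single FNV-1a hash (double hashing) is an assumption made to keep the example short.

```go
package main

import (
	"fmt"
	"hash/fnv"
)

// Bloom answers "definitely absent" or "possibly present" for a key.
type Bloom struct {
	bits []uint64
	m    uint64 // number of bits
	k    uint64 // number of probes per key
}

// NewBloom rounds the requested bit count up to a multiple of 64.
func NewBloom(mBits, k uint64) *Bloom {
	words := (mBits + 63) / 64
	if words == 0 {
		words = 1
	}
	return &Bloom{bits: make([]uint64, words), m: words * 64, k: k}
}

// hashPair splits one 64-bit hash into two values for double hashing.
func hashPair(key []byte) (h1, h2 uint64) {
	h := fnv.New64a()
	h.Write(key)
	sum := h.Sum64()
	return sum & 0xffffffff, sum>>32 | 1 // forcing h2 odd keeps the stride non-zero
}

func (b *Bloom) Add(key []byte) {
	h1, h2 := hashPair(key)
	for i := uint64(0); i < b.k; i++ {
		pos := (h1 + i*h2) % b.m
		b.bits[pos/64] |= uint64(1) << (pos % 64)
	}
}

// MayContain returns false only if the key was never added.
// A true result can be a false positive.
func (b *Bloom) MayContain(key []byte) bool {
	h1, h2 := hashPair(key)
	for i := uint64(0); i < b.k; i++ {
		pos := (h1 + i*h2) % b.m
		if b.bits[pos/64]&(uint64(1)<<(pos%64)) == 0 {
			return false
		}
	}
	return true
}

func main() {
	bf := NewBloom(1024, 4)
	bf.Add([]byte("user:42"))
	fmt.Println(bf.MayContain([]byte("user:42")))
}
```

In an LSM read path, a false result lets the engine skip an SSTable entirely, while a true result still requires checking the table.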

Phase VI: Observability, SRE, and Production Hardening (Lessons 76-90)

Lesson 76: Monitoring the Four Golden Signals. Implementing real-time tracking of Latency, Traffic, Errors, and Saturation.

Lesson 77: Distributed Tracing with OpenTelemetry. Integrating Trace and Span IDs into the binary protocol to visualize a request as it moves through the cluster.

Lesson 78: Context Propagation in Distributed Go. Mastering the context package to carry deadlines and cancellation signals across RPC boundaries.

Lesson 79: P99 Latency and the "Long Tail". Identifying the causes of tail latency: GC pauses, network jitter, and disk I/O spikes.

Lesson 80: SLOs, SLIs, and Error Budgets. Learning to define measurable targets for uptime and performance. Using error budgets to balance safety and speed.

Lesson 81: Chaos Engineering: The Philosophy of Failure. Learning to proactively inject faults to uncover hidden vulnerabilities in the distributed state.

Lesson 82: Fault Injection with Chaos Mesh. Simulating network partitions, packet loss, and clock drift in a Kubernetes environment.

Lesson 83: CPU and Memory Saturation Tests. Injecting synthetic load to verify that the system’s backpressure and rate-limiting mechanisms function correctly.

Lesson 84: Automated Post-Mortems and RCA. Learning to use trace data and logs to automatically identify the root cause of a latency spike.

Lesson 85: Load Shedding and Adaptive Throttling. Implementing mechanisms to gracefully reject traffic when the system is near its breaking point.

Lesson 86: Managing Multi-Region Deployment. The challenge of geo-routing. Balancing the cost of cross-region quorums with the requirement for regional survivability.

Lesson 87: Canary Deployments and Observability. Using metrics to detect subtle regressions in performance during a rolling update of the cluster.

Lesson 88: The SRE Unified Dashboard. Designing a high-level view of the cluster that correlates deployments, resource usage, and user impact.

Lesson 89: Security and Encryption at Scale. Implementing mTLS and data-at-rest encryption without killing the RPS target. Using hardware acceleration for crypto.

Lesson 90: Capstone Project: The "100M RPS" Challenge. The final test: a global deployment of AionDB subjected to synthetic traffic and a series of chaotic disruptions.

Technical Synthesis: Beyond the Baseline

Reaching the 100M RPS target requires a holistic synthesis of the techniques described above. The performance of a distributed system is rarely limited by a single "slow function"; rather, it is the result of cumulative overhead across the entire stack.

The Memory Hierarchy and the Go Runtime

In a high-scale environment, the Go garbage collector must be viewed not as a utility, but as a resource competitor. The concurrent mark-sweep collector is designed for low latency, but it accomplishes this by stealing CPU cycles from application goroutines during the mark phase.

The primary strategy for 100M RPS is not merely "fewer allocations" but "fewer pointers." By storing keys and values in large, contiguous byte slices and using integer offsets rather than object pointers, the GC is effectively blinded to the data, allowing it to complete its mark phase in microseconds despite a 100GB heap.
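A minimal sketch of this layout is shown below, assuming a hypothetical FlatStore type: one byte arena holds every key and value, and an open-addressing table of integer offsets indexes it. Neither slice contains pointers, so the collector has nothing to trace inside them. The sketch does not resize, never reclaims overwritten values, and its uint32 offsets limit the arena to 4 GiB.

```go
package main

import (
	"bytes"
	"fmt"
)

// slot holds only integers and a bool, so a []slot is pointer-free.
type slot struct {
	hash           uint64
	keyOff, keyLen uint32
	valOff, valLen uint32
	used           bool
}

// FlatStore is an append-only store: a byte arena plus a
// linear-probing index of offsets into it.
type FlatStore struct {
	data  []byte
	slots []slot // length is a power of two
	count int
}

func NewFlatStore(slotCount int) *FlatStore {
	n := 2
	for n < slotCount {
		n <<= 1
	}
	return &FlatStore{slots: make([]slot, n)}
}

// fnv1a is the 64-bit FNV-1a hash, inlined to avoid allocating a hasher.
func fnv1a(b []byte) uint64 {
	h := uint64(14695981039346656037)
	for _, c := range b {
		h ^= uint64(c)
		h *= 1099511628211
	}
	return h
}

// find returns the slot holding key, or the first free slot on its probe
// path. It terminates because Put keeps the table at most half full.
func (s *FlatStore) find(key []byte, h uint64) *slot {
	mask := uint64(len(s.slots) - 1)
	for i := h & mask; ; i = (i + 1) & mask {
		sl := &s.slots[i]
		if !sl.used {
			return sl
		}
		if sl.hash == h && bytes.Equal(s.data[sl.keyOff:sl.keyOff+sl.keyLen], key) {
			return sl
		}
	}
}

// Put stores or overwrites key. It returns false when the index is full.
func (s *FlatStore) Put(key, val []byte) bool {
	h := fnv1a(key)
	sl := s.find(key, h)
	if !sl.used {
		if (s.count+1)*2 > len(s.slots) {
			return false
		}
		s.count++
	}
	keyOff := uint32(len(s.data))
	s.data = append(s.data, key...)
	valOff := uint32(len(s.data))
	s.data = append(s.data, val...)
	*sl = slot{hash: h, keyOff: keyOff, keyLen: uint32(len(key)),
		valOff: valOff, valLen: uint32(len(val)), used: true}
	return true
}

// Get returns a view into the arena; callers must not modify it.
func (s *FlatStore) Get(key []byte) ([]byte, bool) {
	sl := s.find(key, fnv1a(key))
	if !sl.used {
		return nil, false
	}
	return s.data[sl.valOff : sl.valOff+sl.valLen], true
}

func main() {
	s := NewFlatStore(1024)
	s.Put([]byte("user:42"), []byte("alice"))
	v, ok := s.Get([]byte("user:42"))
	fmt.Println(string(v), ok)
}
```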

Consensus and the Speed of Light

The Raft consensus algorithm is inherently limited by the network's round-trip time (RTT). In a multi-region cluster, the "Impossible Trinity" states that one can achieve any two of the following: low-latency writes, regional survivability, and strong consistency—but not all three simultaneously.

To approach the 100M RPS target while maintaining consistency, AionDB utilizes "Lease Reads." The cluster leader holds a time-limited lease, renewed through heartbeats acknowledged by a quorum, and while that lease is valid it can serve read requests directly from its local in-memory skip list without performing a quorum check. This effectively turns the system into a local-speed engine for reads. Reads stay strongly consistent only as long as clock drift between nodes remains within the bound the lease assumes; writes still go through the Raft log and keep their strong consistency guarantees.
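The lease check itself is small. The sketch below shows one way it might look; the Lease type, its fields, and the readLocal helper are hypothetical, and the safety margin maxDrift is an assumed upper bound on clock drift that a real deployment would have to justify.

```go
package main

import (
	"errors"
	"fmt"
	"time"
)

// ErrLeaseExpired tells the caller to fall back to a quorum read.
var ErrLeaseExpired = errors.New("lease expired: fall back to a quorum read")

// Lease records when the leader last proved its leadership to a quorum.
type Lease struct {
	start    time.Time     // send time of the last quorum-acknowledged heartbeat
	duration time.Duration // assumed to be shorter than the election timeout
	maxDrift time.Duration // assumed bound on clock drift between nodes
}

// Renew is called after a majority acknowledges a heartbeat sent at sentAt.
// Using the send time rather than the ack time keeps the lease conservative.
func (l *Lease) Renew(sentAt time.Time) { l.start = sentAt }

// ValidAt reports whether a local read at time now is still safe.
func (l *Lease) ValidAt(now time.Time) bool {
	return now.Before(l.start.Add(l.duration - l.maxDrift))
}

// readLocal serves a read from local state only under a valid lease.
func readLocal(l *Lease, store map[string]string, key string, now time.Time) (string, error) {
	if !l.ValidAt(now) {
		return "", ErrLeaseExpired
	}
	return store[key], nil
}

func main() {
	l := &Lease{duration: 2 * time.Second, maxDrift: 200 * time.Millisecond}
	store := map[string]string{"user:42": "alice"}

	now := time.Now()
	l.Renew(now)

	fmt.Println(readLocal(l, store, "user:42", now.Add(time.Second)))
	fmt.Println(readLocal(l, store, "user:42", now.Add(3*time.Second)))
}
```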

The Human Element: Latency and Retention

Finally, the technical achievements of the 100M RPS goal must serve a business purpose. Latency is not an abstract metric; it is a direct inhibitor of human cognitive flow.

The observability and P99 management modules of the course ensure that engineers do not just build a "fast" system, but a "predictable" one. Consistency in response time is often more valuable for user trust than a lower average latency marred by high variance.
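The difference between "fast on average" and "predictable" is easy to show with numbers. The sketch below uses made-up latency samples (in milliseconds) and a nearest-rank percentile; the function names are hypothetical. Both workloads have the same mean, yet their P99 values differ by more than an order of magnitude.

```go
package main

import (
	"fmt"
	"sort"
)

// percentile returns the p-th percentile (1 to 100) of samples using the
// nearest-rank method: the smallest value with at least p% of the
// samples at or below it.
func percentile(samples []float64, p int) float64 {
	if len(samples) == 0 {
		return 0
	}
	sorted := append([]float64(nil), samples...)
	sort.Float64s(sorted)
	rank := (p*len(sorted) + 99) / 100 // integer ceiling of p/100 * n
	if rank < 1 {
		rank = 1
	}
	if rank > len(sorted) {
		rank = len(sorted)
	}
	return sorted[rank-1]
}

func mean(samples []float64) float64 {
	if len(samples) == 0 {
		return 0
	}
	sum := 0.0
	for _, s := range samples {
		sum += s
	}
	return sum / float64(len(samples))
}

func main() {
	// Illustrative data only: 100 requests per workload.
	steady := make([]float64, 100)
	spiky := make([]float64, 100)
	for i := range steady {
		steady[i] = 10 // every request takes 10 ms
		spiky[i] = 5   // most requests take 5 ms ...
	}
	spiky[98], spiky[99] = 255, 255 // ... but two stall for 255 ms

	fmt.Printf("steady: mean=%.0f ms p99=%.0f ms\n", mean(steady), percentile(steady, 99))
	fmt.Printf("spiky:  mean=%.0f ms p99=%.0f ms\n", mean(spiky), percentile(spiky, 99))
}
```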

Conclusion: The Path to Systems Mastery

Mastering the creation of a 100M RPS distributed system requires a shift in perspective. The engineer must move from being a consumer of abstractions to an architect of the underlying machinery. Through the construction of AionDB, students navigate the complex intersections of memory layout, concurrent logic, consensus theory, and network physics. This course provides the rigorous, mentor-driven path necessary to excel at the absolute limits of software performance, preparing engineers to lead the design of the next generation of data-intensive infrastructure.